Increase Memory Address Space For Mac

10/14/2021

Virtual memory, or page file memory, allows the computer to compensate for a shortage of physical memory. When virtual memory is present, the logical address space is larger than the physical address space provided by the available RAM. The actual size of the logical address space, and hence the amount of virtual memory, depends on a number of factors, including the addressing mode currently used by the Memory Manager. Where code sits in that address space also matters for security: early forms of code reuse rely on gadgets being located at known addresses in memory, which is what address-space layout randomization is meant to prevent.

Source: Abraham Silberschatz, Greg Gagne, and Peter Baer Galvin, "Operating System Concepts, Ninth Edition", Chapter 8.

Obviously memory accesses and memory management are a very important part of modern computer operation. Every instruction has to be fetched from memory before it can be executed, and most instructions involve retrieving data from memory, storing data in memory, or both. The advent of multi-tasking OSes compounds the complexity of memory management, because as processes are swapped in and out of the CPU, so must their code and data be swapped in and out of memory, all at high speeds and without interfering with any other processes. Shared memory, virtual memory, the classification of memory as read-only versus read-write, and concepts like copy-on-write forking all further complicate the issue.

Let's take a look at how to increase the page file size, that is, the virtual memory.

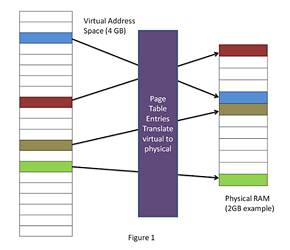

The symbolic names that a program uses for memory locations must be mapped, or bound, to physical memory addresses, which typically occurs in several stages:

Compile Time - If it is known at compile time where a program will reside in physical memory, then absolute code can be generated by the compiler, containing actual physical addresses. However, if the load address changes at some later time, then the program will have to be recompiled.

Load Time - If the location at which a program will be loaded is not known at compile time, then the compiler must generate relocatable code, which references addresses relative to the start of the program. If that starting address changes, then the program must be reloaded but not recompiled.

Execution Time - If a program can be moved around in memory during the course of its execution, then binding must be delayed until execution time.

Figure 8.3 shows the various stages of the binding processes and the units involved in each stage:

Figure 8.3 - Multistep processing of a user program

8.1.3 Logical Versus Physical Address Space

The address generated by the CPU is a logical address, whereas the address actually seen by the memory hardware is a physical address. Addresses bound at compile time or load time have identical logical and physical addresses. Addresses created at execution time, however, have different logical and physical addresses. In this case the logical address is also known as a virtual address, and the two terms are used interchangeably by our text. The set of all logical addresses used by a program composes the logical address space, and the set of all corresponding physical addresses composes the physical address space.

User programs work entirely in logical address space, and any memory references or manipulations are done using purely logical addresses. Only when the address gets sent to the physical memory chips is the physical memory address generated.

Figure 8.4 - Dynamic relocation using a relocation register
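The dynamic relocation that Figure 8.4 refers to can be illustrated with a toy model. The following is only a sketch under stated assumptions: the class name `RelocationMMU`, the `limit` bounds check, and the numeric values are illustrative and do not come from the text or from any real hardware interface.

```python
class RelocationMMU:
    """Toy model of an MMU that uses a single relocation register.

    The limit check is an added assumption; the text only describes
    the relocation register being added to every memory request.
    """

    def __init__(self, relocation, limit):
        self.relocation = relocation  # physical address where the process starts
        self.limit = limit            # size of the logical address space (assumed)

    def translate(self, logical_address):
        # The program only ever supplies a logical address.
        if not 0 <= logical_address < self.limit:
            raise ValueError(f"logical address {logical_address} out of range")
        # The physical address is formed only at the moment of the memory access.
        return self.relocation + logical_address


mmu = RelocationMMU(relocation=14000, limit=4096)
physical = mmu.translate(346)

# Moving the process elsewhere in memory only changes the register;
# the logical address used by the program stays the same.
mmu.relocation = 20000
moved = mmu.translate(346)
```

The same logical address 346 maps to two different physical addresses depending on the register value, which is the sense in which execution-time binding allows a process to be moved during execution.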
The memory-management unit ( MMU ), which maps logical addresses to physical addresses at run time, can take on many forms. One of the simplest is a modification of the base-register scheme described earlier. The base register is now termed a relocation register, whose value is added to every memory request at the hardware level. Note that user programs never see physical addresses.

8.1.4 Dynamic Loading

With dynamic loading, a routine is loaded only when it is first called. The advantage is that unused routines need never be loaded, reducing total memory usage and generating faster program startup times. The downside is the added complexity and overhead of checking to see if a routine is loaded every time it is called and then loading it up if it is not already loaded.

8.1.5 Dynamic Linking and Shared Libraries

With static linking, library modules get fully included in executable modules, wasting both disk space and main memory, because every program that includes a certain routine from the library has to have its own copy of that routine linked into its executable code. With dynamic linking, however, only a stub is linked into the executable module, containing references to the actual library module linked in at run time. This method saves disk space, because the library routines do not need to be fully included in the executable modules, only the stubs.

An added benefit of dynamically linked libraries ( DLLs, also known as shared libraries or shared objects on UNIX systems ) involves easy upgrades and updates. When a program statically links a routine from a standard library and the routine changes, then the program must be re-built ( re-linked ) in order to incorporate the changes. However, if DLLs are used, then as long as the stub doesn't change, the program can be updated merely by loading new versions of the DLLs onto the system. Version information is maintained in both the program and the DLLs, so that a program can specify a particular version of the DLL if necessary.
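The trade-off of dynamic loading (unused routines are never loaded, but every call pays for a "is it loaded yet?" check) can be sketched in Python. This is an analogy rather than how an OS loader works: `LazyRoutine` is a hypothetical helper, and the standard `json` module simply stands in for a library routine.

```python
import importlib


class LazyRoutine:
    """Hypothetical wrapper that loads a routine only when it is first called."""

    def __init__(self, module_name, routine_name):
        self.module_name = module_name
        self.routine_name = routine_name
        self._routine = None   # nothing has been loaded yet
        self.load_count = 0    # how many times the load step actually ran

    def __call__(self, *args, **kwargs):
        # This check runs on every call: the overhead the text mentions.
        if self._routine is None:
            module = importlib.import_module(self.module_name)
            self._routine = getattr(module, self.routine_name)
            self.load_count += 1
        return self._routine(*args, **kwargs)


dumps = LazyRoutine("json", "dumps")   # called below, so it gets loaded once
loads = LazyRoutine("json", "loads")   # never called, so it is never loaded

text = dumps({"a": 1})
text_again = dumps({"a": 1})
```

After the two calls, `dumps.load_count` is 1 and `loads.load_count` is 0: the load step ran once for the routine that was used and never for the one that was not.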
A dynamically linked library routine can be shared by multiple processes in memory. ( Each process would have its own copy of the data section of the routines, but that may be small relative to the code segments. ) Obviously the OS must manage shared routines in memory. After the first call through a stub, further calls to the same routine will access the routine directly and not incur the overhead of the stub access. ( Following the UML Proxy Pattern. )

A process must be loaded into memory in order to execute. If there is not enough memory available to keep all running processes in memory at the same time, then some processes that are not currently using the CPU may have their memory swapped out to a fast local disk called the backing store. If execution time binding is used, then the processes can be swapped back into any available location.

Swapping is a very slow process compared to other operations. For example, if a user process occupied 10 MB and the transfer rate for the backing store were 40 MB per second, then it would take 1/4 second ( 250 milliseconds ) just to do the data transfer. Adding in a latency lag of 8 milliseconds and ignoring head seek time for the moment, and further recognizing that swapping involves moving old data out as well as new data in, the overall transfer time required for this swap is 2 x 258 = 516 milliseconds, or over half a second.
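The swap-time arithmetic can be checked with a few lines of Python. This is a minimal sketch that assumes the swapped-in process is the same size as the one swapped out and, as in the text, ignores head seek time; the function name `swap_time_ms` is illustrative. With the quoted figures, each direction costs 250 ms of transfer plus 8 ms of latency, so the round trip is 2 x 258 ms.

```python
def swap_time_ms(process_mb, transfer_mb_per_s, latency_ms):
    """Milliseconds to swap one process out and an equally sized one in."""
    transfer_ms = process_mb / transfer_mb_per_s * 1000  # pure data transfer
    one_way_ms = transfer_ms + latency_ms                # add latency, ignore seek time
    return 2 * one_way_ms                                # old data out, new data in


# Figures from the example: 10 MB process, 40 MB/s backing store, 8 ms latency.
total = swap_time_ms(process_mb=10, transfer_mb_per_s=40, latency_ms=8)
```

Changing `process_mb` shows how the cost grows linearly with process size, which is why swapping whole processes becomes impractical as they get larger.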


Author: Christie
